Sandboxes on Kubernetes: isolation options and how to run them in production

Most enterprises already run Kubernetes. When they need to run AI agents that execute untrusted code, the question is not whether to use Kubernetes; it is how to add the isolation, lifecycle management, and security controls that standard Kubernetes primitives do not provide out of the box.

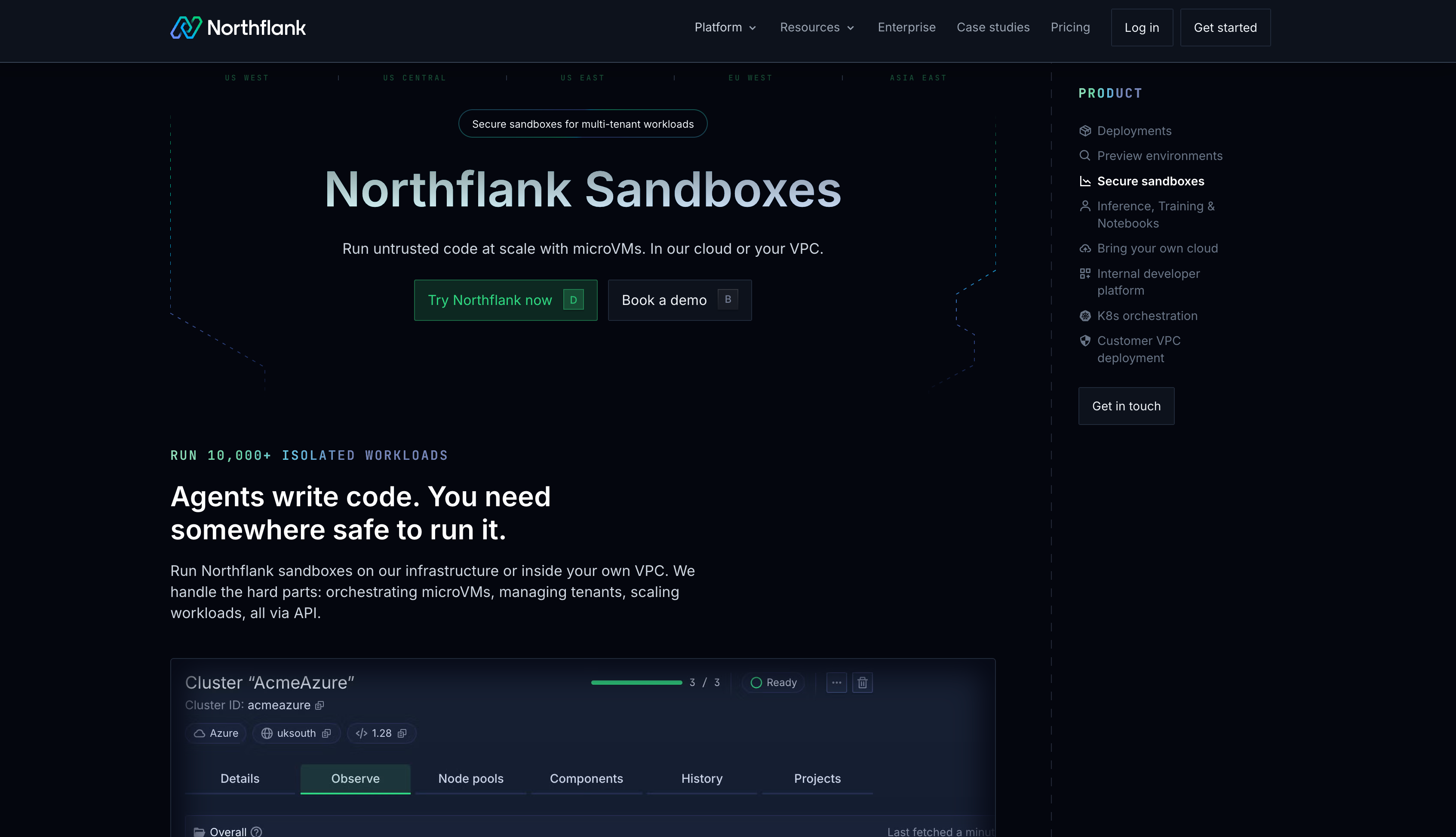

This article covers why running sandboxes on Kubernetes requires more than a Pod manifest, what options exist today, and how Northflank provides production-grade sandbox infrastructure on top of Kubernetes without the operational overhead of building it yourself.

- Standard Kubernetes containers share the host kernel. For untrusted code execution, this is not sufficient. You need Kata Containers or gVisor applied via RuntimeClass.

- Raw Kubernetes primitives (Deployments, StatefulSets, Pods) do not map cleanly to AI agent workload patterns: stateful, singleton, idle-heavy, with lifecycle controls like pause and resume.

- The Kubernetes Agent Sandbox project (kubernetes-sigs/agent-sandbox) adds a set of CRDs and a controller that fill this gap with a declarative API for isolated, stateful, singleton workloads.

- Northflank provides production-grade sandbox infrastructure built on Kubernetes, with Kata Containers, Firecracker, and gVisor isolation, managed orchestration, and self-serve BYOC into your existing AWS, GCP, Azure, or on-premises Kubernetes clusters.

What is Northflank? Northflank is a full-stack cloud platform that runs production-grade sandbox infrastructure on Kubernetes. If your enterprise already runs on Kubernetes and needs AI agent isolation without building the stack yourself, that is what Northflank provides: Kata Containers, Firecracker, and gVisor isolation, managed orchestration, BYOC into your own cluster, and a full-stack control plane that also covers databases and GPU workloads. Sign up to get started or book a demo.

Standard Kubernetes containers share the host kernel. Every container on a node issues system calls to the same Linux kernel, so a kernel vulnerability exploited from inside one container can compromise the host and every other container on the same node. For trusted internal workloads where you control what code runs, this is acceptable. For AI agent workloads where the agent generates and executes code at runtime, it is not.

AI agents also do not map cleanly to existing Kubernetes workload types. A Deployment manages replicated, stateless pods. A StatefulSet manages numbered, stable pods in a set. An AI agent runtime is typically a singleton: one isolated environment per user session or task, mostly idle, needing persistent state, a stable identity, and the ability to pause and resume without losing context. Approximating this with a StatefulSet of size 1, a headless Service, and a PersistentVolumeClaim works at small scale but becomes an operational problem at hundreds or thousands of concurrent agents.
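To make that approximation concrete, here is a minimal sketch of the three objects a single agent needs when it is emulated with stock primitives. The manifests are built as Python dicts that serialize to JSON. The `agent-<id>` naming, the image, the port, the mount path, and the storage size are all illustrative assumptions, not values from any real deployment.

```python
import json


def agent_objects(agent_id: str, image: str = "example.com/agent-runtime:latest") -> list[dict]:
    """Return the Service, PVC, and StatefulSet needed for ONE emulated agent."""
    name = f"agent-{agent_id}"  # hypothetical naming scheme
    labels = {"app": name}

    # Headless Service: gives the single pod a stable DNS identity.
    service = {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {"clusterIP": "None", "selector": labels, "ports": [{"port": 8080}]},
    }

    # PersistentVolumeClaim: state that survives pod restarts.
    pvc = {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": f"{name}-state"},
        "spec": {
            "accessModes": ["ReadWriteOnce"],
            "resources": {"requests": {"storage": "1Gi"}},
        },
    }

    # StatefulSet of size 1: the "singleton" is only a convention here.
    stateful_set = {
        "apiVersion": "apps/v1",
        "kind": "StatefulSet",
        "metadata": {"name": name},
        "spec": {
            "replicas": 1,
            "serviceName": name,
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    "containers": [{
                        "name": "agent",
                        "image": image,
                        "volumeMounts": [{"name": "state", "mountPath": "/workspace"}],
                    }],
                    "volumes": [{
                        "name": "state",
                        "persistentVolumeClaim": {"claimName": f"{name}-state"},
                    }],
                },
            },
        },
    }
    return [service, pvc, stateful_set]


if __name__ == "__main__":
    print(json.dumps(agent_objects("42"), indent=2))
```

Calling `agent_objects` once per agent yields three objects each, so 1,000 concurrent agents means 3,000 separately managed objects before any pause, resume, or cleanup logic is written.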

Kubernetes supports multiple container runtimes via the Container Runtime Interface (CRI). By defining a RuntimeClass and referencing it from a pod's runtimeClassName field, you can run pods with different isolation backends without changing your application code; the only manifest change is that one field.

| Runtime | Isolation model | How it works on Kubernetes | Best for |
|---|---|---|---|
| Standard containers (runc) | OS-level (namespaces, cgroups) | Default runtime, shared host kernel | Trusted internal workloads |
| gVisor (runsc) | Syscall interception (user-space kernel) | RuntimeClass gvisor, intercepts syscalls before they reach the host kernel | Moderate-trust workloads, lower overhead than microVMs |
| Kata Containers | Hardware-level (KVM hypervisor) | RuntimeClass kata, each pod runs in its own microVM with a dedicated kernel | Untrusted code, multi-tenant AI agents |
| Firecracker via Kata | Hardware-level (KVM hypervisor) | Kata with Firecracker VMM backend, faster startup and lower overhead than QEMU | Production AI sandboxes at scale |

For multi-tenant AI agent workloads where agents execute LLM-generated code, Kata Containers (optionally with the Firecracker backend) is the right default. gVisor is appropriate when full VM overhead is not justified and the threat model does not require hardware-enforced kernel isolation.
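The RuntimeClass mechanism can be sketched as two small manifests: a cluster-scoped RuntimeClass that names a handler, and a Pod that opts in through `runtimeClassName`. The sketch below builds both as Python dicts and wraps them in a `List` so they can be serialized to JSON. The handler string must match whatever is configured in the node's container runtime; `kata` here is an assumption, as are the pod name and image.

```python
import json


def runtime_class(name: str, handler: str) -> dict:
    """Build a RuntimeClass manifest (node.k8s.io/v1)."""
    return {
        "apiVersion": "node.k8s.io/v1",
        "kind": "RuntimeClass",
        "metadata": {"name": name},
        # Must match a handler configured in the node's CRI runtime
        # (containerd or CRI-O). The value used below is an assumption.
        "handler": handler,
    }


def sandboxed_pod(name: str, image: str, runtime_class_name: str) -> dict:
    """Build a Pod manifest that opts into the given RuntimeClass."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            # The one field that selects the isolation backend.
            "runtimeClassName": runtime_class_name,
            "containers": [{"name": "main", "image": image}],
        },
    }


def as_list(items: list[dict]) -> dict:
    """Wrap several manifests in a single v1 List object."""
    return {"apiVersion": "v1", "kind": "List", "items": items}


if __name__ == "__main__":
    rc = runtime_class("kata", "kata")
    pod = sandboxed_pod("agent-run", "python:3.12-slim", rc["metadata"]["name"])
    print(json.dumps(as_list([rc, pod]), indent=2))
```

Swapping the isolation backend for a workload then comes down to changing the string passed as `runtime_class_name`, provided a matching RuntimeClass and node-level runtime already exist on the cluster.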

The Agent Sandbox project (kubernetes-sigs/agent-sandbox) is an open-source Kubernetes controller and set of CRDs developed under SIG Apps. It introduces a declarative API specifically designed for the workload pattern that AI agents require: stateful, singleton, idle-heavy environments with stable identity and lifecycle controls.

The project introduces three core resources:

- Sandbox CRD – A single, stateful pod with a stable hostname and network identity, persistent storage that survives restarts, and lifecycle controls covering creation, scheduled deletion, pausing, and resuming.

- SandboxTemplate – Reusable templates for creating Sandboxes with predefined security contexts, resource limits, and runtime configurations. Defines guardrails as code.

- SandboxWarmPool – A pool of pre-warmed Sandbox pods. When a new sandbox is requested, the controller claims one from the warm pool rather than creating it from scratch, which avoids most of the cold-start latency.
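The warm-pool idea in the last bullet can be illustrated with a toy model. This is not the controller's implementation or API; the class, its methods, and the `sandbox-N` names are hypothetical and exist only to show the claim-or-create decision.

```python
from collections import deque
from dataclasses import dataclass, field
from itertools import count


@dataclass
class WarmPool:
    """Toy model: keep `size` sandboxes started ahead of demand."""

    size: int
    _ids: count = field(init=False, repr=False, default_factory=lambda: count(1))
    _ready: deque = field(init=False, repr=False, default_factory=deque)

    def __post_init__(self) -> None:
        self.replenish()

    def _create(self) -> str:
        # Stands in for the slow path: schedule a pod, start the
        # isolated runtime, and pull the image.
        return f"sandbox-{next(self._ids)}"

    def replenish(self) -> None:
        """Top the pool back up to its target size."""
        while len(self._ready) < self.size:
            self._ready.append(self._create())

    def claim(self) -> tuple[str, bool]:
        """Return (sandbox_name, was_warm)."""
        if self._ready:
            return self._ready.popleft(), True  # fast path: already running
        return self._create(), False  # pool exhausted: cold start


if __name__ == "__main__":
    pool = WarmPool(size=2)
    for _ in range(3):
        print(pool.claim())
```

With a pool of two, the first two claims are served warm and the third falls back to a cold start, which is why pool size has to be tuned against burst demand and the cost of idle sandboxes.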

The project supports gVisor and Kata Containers as isolation backends, configured via runtimeClassName. It is designed to be backend-agnostic. As of April 2026, the project is in active development and is not yet production-ready for all workloads.

The Agent Sandbox project provides a better abstraction layer on top of Kubernetes primitives. It does not remove the operational burden of running the stack underneath it. You still need to install and maintain Kata Containers or gVisor on your nodes, manage the RuntimeClass configurations, operate the controller, write network policies, handle secrets injection, wire in observability, and deal with the operational complexity of running microVMs on Kubernetes at scale.

For platform teams that want to adopt the Agent Sandbox abstraction but do not have the capacity to build the isolation layer underneath it, the gap between the CRD and a production deployment remains significant.

Northflank provides the full sandbox infrastructure stack on top of Kubernetes, including the isolation layer, orchestration, networking, secrets management, and observability that enterprises need to run AI agent sandboxes in production.

Northflank supports Kata Containers with Cloud Hypervisor, Firecracker, and gVisor, selectable per workload. With the Kata Containers and Firecracker backends, every sandbox runs in its own microVM with a dedicated kernel. The platform handles orchestration, bin-packing, autoscaling, and microVM lifecycle management. You get the security model of the Agent Sandbox project without building and operating the underlying infrastructure stack yourself.

For enterprises already on Kubernetes, Northflank BYOC is a self-serve deployment of the platform into your existing EKS, GKE, AKS, or bare-metal Kubernetes cluster. Northflank manages the sandbox infrastructure layer on your cluster while your data never leaves your own VPC. You keep your existing Kubernetes investment, your compliance posture, and your cloud billing relationships while gaining production-grade sandbox isolation without the engineering overhead.

cto.new migrated their entire sandbox infrastructure to Northflank in two days and went from unworkable provisioning to thousands of daily deployments for untrusted code with linear, per-second billing. That is what production sandbox infrastructure on Kubernetes looks like when you do not build it yourself.

Pricing: $0.01667/vCPU-hour and $0.00833/GB-hour, billed per second. BYOC deployments bill against your own cloud account.
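As a worked example of per-second billing at these rates, the sketch below computes the cost of a single sandbox run. It assumes charges are strictly proportional to allocated vCPU, allocated memory, and runtime in seconds, with no minimum charge and no rounding; those assumptions are not stated in the pricing line and should be checked against the actual billing terms.

```python
VCPU_PER_HOUR = 0.01667  # USD per vCPU-hour, from the pricing above
GB_PER_HOUR = 0.00833    # USD per GB-hour of memory, from the pricing above


def sandbox_cost(vcpus: float, memory_gb: float, seconds: int) -> float:
    """Cost in USD of one sandbox, billed per second.

    Assumes purely linear billing: no minimum charge, no rounding.
    """
    hourly_rate = vcpus * VCPU_PER_HOUR + memory_gb * GB_PER_HOUR
    return hourly_rate * seconds / 3600


if __name__ == "__main__":
    # A 2 vCPU, 4 GB sandbox that lives for 90 seconds.
    print(f"${sandbox_cost(2, 4, 90):.7f}")
```

Under these assumptions, a 2 vCPU, 4 GB sandbox that runs for 90 seconds costs about $0.0017, and a 1 vCPU, 1 GB sandbox left running for a full hour costs $0.025.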

Get started on Northflank (self-serve, no demo required). Or book a demo to walk through how Northflank fits your Kubernetes environment.

A standard Pod uses container isolation, which shares the host kernel. For AI agents that execute untrusted or LLM-generated code, kernel sharing introduces security risk. You also need a stable identity, persistent storage, lifecycle controls like pause and resume, and management at scale, none of which a raw Pod provides cleanly.

Install Kata Containers on your cluster and create a RuntimeClass resource that references the Kata handler. Pods that specify that RuntimeClass will run inside a Kata microVM with a dedicated kernel. gVisor follows the same pattern using the runsc runtime. Both require node-level configuration and ongoing maintenance.

Northflank can run inside your own cluster: BYOC is a self-serve deployment into your existing EKS, GKE, AKS, or bare-metal Kubernetes cluster. Northflank manages the sandbox infrastructure layer on your cluster while your data stays in your own VPC.

Northflank supports Kata Containers with Cloud Hypervisor, Firecracker, and gVisor, applied per workload based on your threat model. With the Kata Containers and Firecracker backends, every sandbox runs in its own microVM with a dedicated kernel. You choose the isolation model; Northflank handles the configuration and operational complexity underneath.

Northflank runs sandboxes alongside managed databases (PostgreSQL, MySQL, MongoDB, Redis), background workers, APIs, CI/CD pipelines, and GPU workloads in the same control plane. You do not need a separate infrastructure stack for each workload type.

Kubernetes is the right foundation for running AI agent sandboxes at enterprise scale. It provides scheduling, networking, storage orchestration, and horizontal scalability. What it does not provide out of the box is the isolation model, lifecycle management, and operational tooling that AI agent workloads specifically require.

The Kubernetes Agent Sandbox project is moving in the right direction by formalizing the workload abstraction. But the operational gap between installing the CRD and running production sandbox infrastructure at scale is real. Northflank closes that gap. You get production-grade microVM isolation on top of Kubernetes, self-serve BYOC into your existing cluster, and a full-stack control plane, without spending months building and maintaining the infrastructure layer yourself.

Sign up for free or book a demo to see how Northflank runs AI agent sandboxes on your Kubernetes infrastructure.

- Agent Sandbox on Kubernetes: how it works and how to run it in production: A detailed look at the kubernetes-sigs/agent-sandbox project, its CRDs, isolation backends, and operational reality.

- How to sandbox AI agents: microVMs, gVisor, and isolation strategies: How to choose the right isolation technology for AI agent workloads based on threat model and performance requirements.

- Kata Containers vs Firecracker vs gVisor: A comparison of microVM and isolation technologies covering security model, performance, and when to use each on Kubernetes.

- What is sandbox infrastructure?: The full stack required to run isolated workloads safely at scale, beyond the isolation layer itself.