What are Kata Containers?

Kata Containers is an open-source container runtime that runs workloads inside lightweight virtual machines rather than standard containers, while integrating with the same container tooling engineers already use. From Kubernetes' perspective, a Kata-backed workload looks like a standard container. Under the hood, each workload gets its own dedicated kernel and hardware-enforced isolation boundary.

This article covers how Kata Containers works, what its components are, how it compares to standard containers and alternative isolation approaches, and when it is the right tool.

- Kata Containers is an open-source container runtime that runs workloads inside lightweight VMs, providing hardware-level isolation while integrating natively with Docker and Kubernetes

- It is an orchestration framework, not itself a VMM. It supports Cloud Hypervisor, Firecracker, and QEMU as interchangeable backends

- Each workload gets its own dedicated guest kernel, enforced by KVM hardware virtualisation

- It is an OpenInfra Foundation project, formed by merging Intel Clear Containers and Hyper.sh's runV

Northflank is a full-stack cloud platform that uses Kata Containers with Cloud Hypervisor as its primary approach for microVM isolation in production, alongside Firecracker and gVisor depending on workload requirements. It has been in production since 2021 across startups, public companies, and government deployments. Get started (self-serve) or book a session with an engineer for specific infrastructure or compliance requirements.

Kata Containers is an open-source project that builds lightweight virtual machines that integrate with the container ecosystem. It is maintained under the OpenInfra Foundation and supports multiple VMM backends, including Cloud Hypervisor, Firecracker, and QEMU.

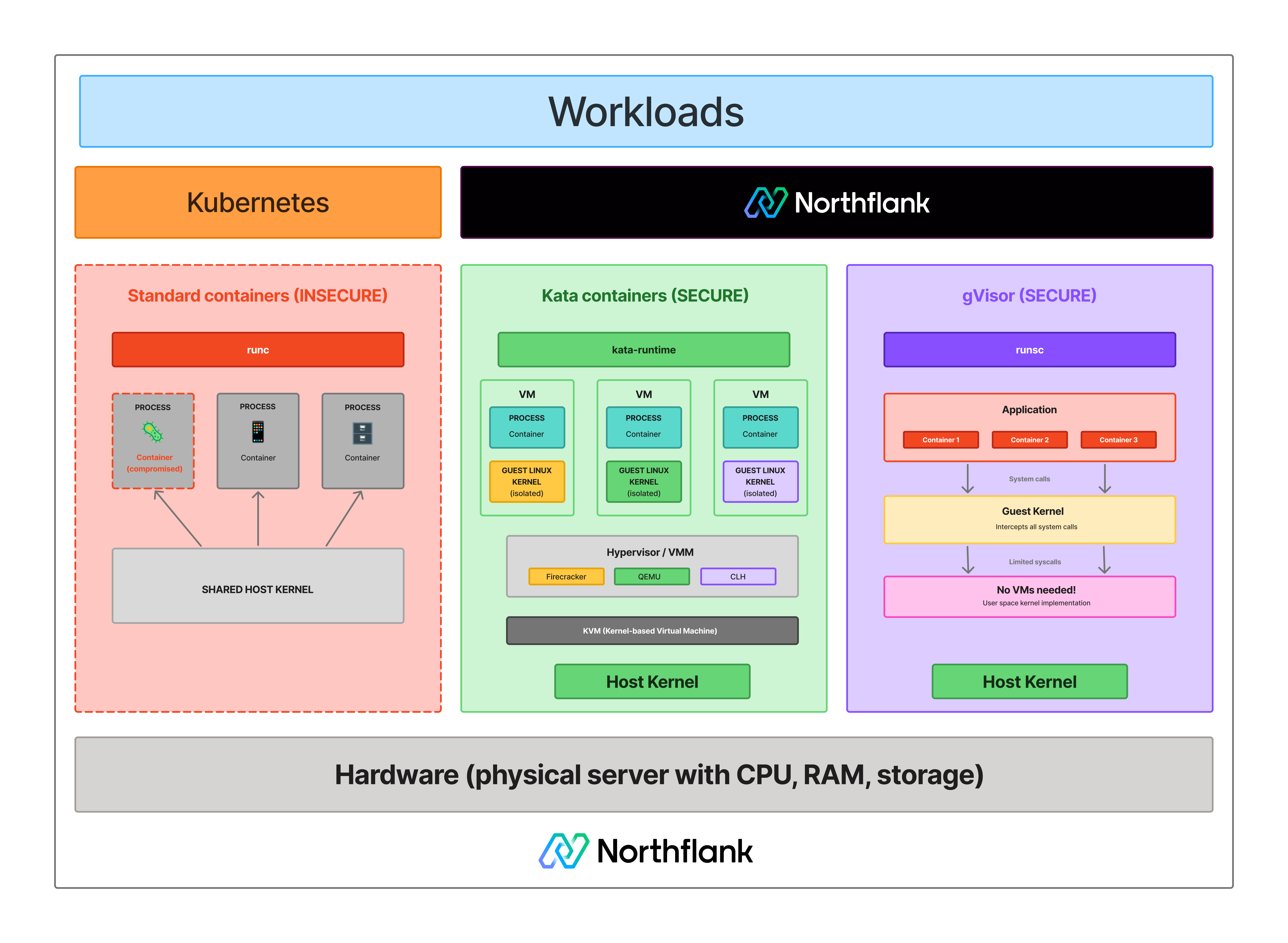

The core idea is straightforward. Standard containers share the host kernel. Kata Containers gives each workload its own guest kernel inside a lightweight VM, enforced by hardware virtualisation via KVM. The workload is isolated at the hardware level, not just the OS level. From the perspective of Kubernetes or Docker, the workload looks and behaves like a standard container. The isolation model underneath is fundamentally different.
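One way to observe this difference is to compare kernel release strings. The sketch below is a minimal illustration, not a Kata tool: it reads the kernel release the same way `uname -r` does, and the helper name `likely_shares_host_kernel` is hypothetical.

```python
import platform


def kernel_release() -> str:
    """Kernel release string, equivalent to `uname -r`."""
    # In a standard (runc) container this is the host's kernel, because it is shared.
    # Inside a Kata pod it is the guest kernel booted for that pod's VM.
    return platform.release()


def likely_shares_host_kernel(workload_release: str, host_release: str) -> bool:
    # Heuristic only: a guest kernel could in principle carry the same
    # release string as the host, so equality is a hint, not proof.
    return workload_release == host_release


if __name__ == "__main__":
    print(kernel_release())
```

Running `uname -r` (or this script, in an image that includes Python) via `kubectl exec` in a standard pod and in a Kata-backed pod, then comparing each result with the node's own value, typically shows the standard pod reporting the host kernel and the Kata pod reporting its guest kernel.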

Kata Containers is not itself a VMM. It is the orchestration layer that sits on top of Firecracker, Cloud Hypervisor, or QEMU and makes microVMs work natively with container tooling. For a broader explanation of what a microVM is and how KVM underpins it, see What is a microVM? and What is KVM?.

Standard containers use Linux namespaces and cgroups to isolate processes from each other. They share the host kernel. Every syscall a containerised workload makes goes directly to the same kernel that every other container on that host is using. A kernel vulnerability exploited by one workload can affect the host and everything else running on it.

For workloads you control and trust, that tradeoff is acceptable. For untrusted code, AI-generated code, customer-submitted scripts, or any multi-tenant environment where different users share infrastructure, the shared kernel is the attack surface. See Containers vs virtual machines for a full breakdown of the isolation tradeoffs.

See how isolation models compare across standard containers, Kata Containers, and gVisor:

When Kubernetes schedules a pod using Kata Containers, instead of starting a container process directly on the host, the Kata runtime provisions a lightweight VM. That VM boots a minimal guest kernel and starts the workload inside it. Networking and storage are connected through virtualised interfaces.

The key components are:

- The Kata runtime (`containerd-shim-kata-v2` in current releases, with the `kata-runtime` CLI for host checks): The runtime that containerd or CRI-O invoke when Kubernetes schedules a pod with a Kata RuntimeClass. It receives the pod request and manages the VM lifecycle.

- The VMM backend: Kata supports Cloud Hypervisor (built for modern cloud workloads), Firecracker (minimal device model, fast boot), and QEMU (broadest hardware compatibility). The VMM creates and manages the actual VM. See What is AWS Firecracker? for a technical breakdown of Firecracker's architecture.

- The guest kernel: A minimal Linux kernel that boots inside the VM. Each workload gets its own. This is the fundamental property that distinguishes Kata from standard containers.

- The Kata agent: A process running inside the VM that communicates with the Kata runtime on the host. It manages workload execution inside the VM on behalf of the runtime.
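The interaction between these components can be summarised as an ordered sequence. This is a simplified, conceptual model written in Python, not Kata's code or API: the `Step` type and `kata_pod_start` function are illustrative names, and real start-up involves more work (networking, storage, image handling) than is shown.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Step:
    component: str
    action: str


def kata_pod_start(vmm: str = "Cloud Hypervisor") -> list[Step]:
    """Simplified, illustrative order of events when a Kata-backed pod starts."""
    return [
        Step("kubelet", "asks containerd or CRI-O (over CRI) to run the pod with the Kata handler"),
        Step("Kata runtime", f"launches {vmm} and asks it to create a VM"),
        Step(vmm, "boots the minimal guest kernel under KVM"),
        Step("guest kernel", "starts the Kata agent inside the VM"),
        Step("Kata runtime", "sends container requests to the agent over a vsock channel"),
        Step("Kata agent", "creates the container and starts the workload inside the VM"),
    ]


if __name__ == "__main__":
    for number, step in enumerate(kata_pod_start("Firecracker"), start=1):
        print(f"{number}. {step.component}: {step.action}")
```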

Boot time is in the range of 150 to 300ms, depending on VMM and configuration, reflecting the guest kernel boot overhead on top of the VMM startup.
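To put that range in context, the minimal sketch below computes what share of wall-clock time the boot represents for a single task. It assumes the boot happens serially before each task with no pre-warmed VM; the function name is illustrative.

```python
def boot_overhead_fraction(task_seconds: float, boot_seconds: float) -> float:
    """Share of total wall-clock time spent booting the VM before one task."""
    # Assumes the task waits for the boot to finish and nothing is pre-warmed.
    return boot_seconds / (task_seconds + boot_seconds)


if __name__ == "__main__":
    for boot in (0.150, 0.300):
        share = boot_overhead_fraction(2.0, boot)
        print(f"2 s task, {boot * 1000:.0f} ms boot: {share:.1%} of wall-clock time")
```

With these inputs, a 2-second task spends roughly 7% (150 ms) to 13% (300 ms) of its wall-clock time on boot; for a task running several minutes, the same overhead is well under 1%.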

See how Kata Containers compares across isolation, performance, and operational complexity:

| | Standard container | Kata Containers | MicroVM (direct) |
|---|---|---|---|
| Isolation model | OS-level (namespaces, cgroups) | Hardware-level (KVM via VMM) | Hardware-level (KVM via VMM) |
| Kernel | Shared host kernel | Dedicated guest kernel | Dedicated guest kernel |
| Boot time | Milliseconds | ~150 ms to ~300 ms depending on VMM | ~125 ms to ~300 ms depending on VMM |
| Memory overhead | Minimal | Low | Under 5 to 10 MiB (VMM only) |
| Kubernetes integration | Native | Native via RuntimeClass (containerd / CRI-O) | Via Kata Containers |
| Orchestration included | Yes (Kubernetes) | Yes | No |
| Best for | Trusted internal workloads | Untrusted or multi-tenant workloads on Kubernetes | Custom serverless platforms |
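On Kubernetes, the extra memory a Kata VM uses can be made visible to the scheduler: a RuntimeClass can declare `overhead.podFixed`, and the scheduler adds that amount to the pod's container requests. The minimal sketch below models that arithmetic for memory only, assuming a hypothetical node with 60,000 MiB allocatable, 512 MiB pods, and a hypothetical 160 MiB fixed overhead; measure your own overhead rather than reusing these numbers.

```python
from __future__ import annotations


def scheduled_memory_mib(container_requests_mib: list[int], pod_fixed_overhead_mib: int = 0) -> int:
    """Memory the scheduler accounts for: container requests plus RuntimeClass podFixed overhead."""
    return sum(container_requests_mib) + pod_fixed_overhead_mib


def pods_per_node(allocatable_mib: int, per_pod_mib: int) -> int:
    """How many identical pods fit by memory alone (ignores CPU and other limits)."""
    return allocatable_mib // per_pod_mib


if __name__ == "__main__":
    ALLOCATABLE_MIB = 60_000  # hypothetical node
    standard = scheduled_memory_mib([512])  # standard (runc) pod, no overhead declared
    kata = scheduled_memory_mib([512], pod_fixed_overhead_mib=160)  # hypothetical overhead
    print(pods_per_node(ALLOCATABLE_MIB, standard), pods_per_node(ALLOCATABLE_MIB, kata))
```

With these example numbers, memory alone fits 117 standard pods but 89 Kata-backed pods per node; the real gap depends entirely on the overhead you measure for your VMM and guest configuration.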

The distinction between Kata Containers and a raw microVM like Firecracker is mostly operational. Firecracker provides the isolation primitive. Kata Containers provides the orchestration layer that makes it work at scale in Kubernetes without building custom infrastructure. Most teams that want microVM isolation in Kubernetes use Kata rather than managing Firecracker or Cloud Hypervisor directly. See Firecracker vs Docker for context on why microVM isolation matters over standard containers.

Kata Containers is designed to work with multiple VMM backends, giving teams flexibility depending on their infrastructure and requirements.

- Cloud Hypervisor is a strong default for most production use cases. It is a Rust-based VMM hosted by the Linux Foundation, targeting modern cloud workloads with support for device passthrough (including GPUs) and live migration while keeping a small, auditable codebase.

- Firecracker is the AWS-built VMM optimised for minimal overhead, with approximately 125ms boot time to userspace and less than 5 MiB of memory overhead per instance in benchmarks. It is a good fit for environments where boot speed and density matter most.

- QEMU offers the broadest hardware compatibility of the three. It carries more overhead than Firecracker or Cloud Hypervisor but is the right choice when hardware compatibility is the primary requirement.

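In Kubernetes, the VMM is selected per workload by defining one RuntimeClass per backend and referencing the appropriate one in each pod spec. The backend used by a generic Kata handler is set in Kata's configuration on each node.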
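A minimal sketch of the per-backend approach generates one RuntimeClass per VMM and prints them as a JSON `List`, which `kubectl apply -f -` accepts. The handler names (`kata-clh`, `kata-fc`, `kata-qemu`) and the `katacontainers.io/kata-runtime` node label are assumptions modelled on common kata-deploy conventions; they must match what is actually configured in containerd or CRI-O on your nodes.

```python
import json

# Assumed handler names, one per VMM backend. They must match the runtime
# names configured in containerd or CRI-O on the Kata-enabled nodes.
BACKEND_HANDLERS = {
    "Cloud Hypervisor": "kata-clh",
    "Firecracker": "kata-fc",
    "QEMU": "kata-qemu",
}


def runtime_class(handler: str) -> dict:
    """Build one RuntimeClass; pods choose a backend by naming it."""
    return {
        "apiVersion": "node.k8s.io/v1",
        "kind": "RuntimeClass",
        "metadata": {"name": handler},
        "handler": handler,
        # Assumed node label: only schedule these pods onto nodes with Kata installed.
        "scheduling": {"nodeSelector": {"katacontainers.io/kata-runtime": "true"}},
    }


manifest = {
    "apiVersion": "v1",
    "kind": "List",
    "items": [runtime_class(h) for h in BACKEND_HANDLERS.values()],
}

if __name__ == "__main__":
    print(json.dumps(manifest, indent=2))
```

Usage would be along the lines of `python runtimeclasses.py | kubectl apply -f -`. The RuntimeClass name and its handler are kept identical here for simplicity; Kubernetes allows them to differ.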

Kubernetes schedules workloads through the Container Runtime Interface (CRI). containerd or CRI-O implement that interface and, by default, launch standard containers with runc. Kata Containers plugs in beneath them as an alternative runtime, so Kubernetes can schedule workloads into Kata VMs without any changes to the control plane.

You create a RuntimeClass resource pointing to the Kata runtime handler and reference it in your pod spec. Pods assigned that RuntimeClass are scheduled into Kata-backed VMs. Pods without it run as standard containers. Both can run on the same cluster simultaneously.
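A minimal sketch of that setup builds one standard pod and one Kata-backed pod. It assumes a RuntimeClass named `kata-clh` already exists on the cluster; that name, the pod names, and the image references are hypothetical. The only difference between the two manifests is the `runtimeClassName` field.

```python
import json
from typing import Optional


def pod(name: str, image: str, runtime_class: Optional[str] = None) -> dict:
    """Minimal Pod manifest; set runtime_class to run it inside a Kata VM."""
    spec = {"containers": [{"name": name, "image": image}]}
    if runtime_class is not None:
        # Pods that name a RuntimeClass run through that handler (here, a Kata VM).
        # Pods that omit it use the node's default runtime, typically runc.
        spec["runtimeClassName"] = runtime_class
    return {"apiVersion": "v1", "kind": "Pod", "metadata": {"name": name}, "spec": spec}


manifest = {
    "apiVersion": "v1",
    "kind": "List",
    "items": [
        pod("trusted-api", "registry.example.com/api:1.0"),  # standard container
        pod("untrusted-job", "registry.example.com/untrusted-job:1.0", "kata-clh"),  # Kata VM
    ],
}

if __name__ == "__main__":
    print(json.dumps(manifest, indent=2))
```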

This makes Kata the practical path to hardware-level isolation for teams already running Kubernetes, without replacing their existing orchestration setup.

Understanding where Kata Containers fits also means understanding where it does not.

- Boot overhead: Each workload boots a guest kernel, adding 150 to 300ms compared to millisecond container startup. For workloads that need to start instantly, that overhead matters.

- Resource overhead: Running a guest kernel and VMM per workload uses more memory than a standard container. Modern VMMs keep their own footprint in the single-digit MiB range, but the guest kernel and Kata agent add to that per-workload cost.

- Not all Kubernetes features work identically: Some features that rely on direct access to host namespaces or specific kernel capabilities behave differently inside a Kata VM. Testing your specific workload is important before moving to production.

- Nested virtualisation requirements: Running Kata on a cloud VM requires the cloud provider to support nested virtualisation on that instance type. Not all providers or instance types support this. gVisor is a practical alternative in those environments. See What is gVisor?
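Before installing Kata on a node, it helps to confirm that hardware virtualisation is actually exposed. The sketch below is a rough, x86-only check assuming standard Linux paths: it looks for the `vmx` (Intel VT-x) or `svm` (AMD-V) CPU flags and for `/dev/kvm`. It is not a substitute for Kata's own host checks, and the `root` parameter exists only so the function can be pointed at a test directory.

```python
from pathlib import Path


def kvm_readiness(root: str = "/") -> dict:
    """Rough x86 host check for running Kata: CPU virtualisation flags and /dev/kvm."""
    base = Path(root)
    flags = set()
    cpuinfo = base / "proc" / "cpuinfo"
    if cpuinfo.exists():
        for line in cpuinfo.read_text().splitlines():
            # x86 kernels list CPU features on lines starting with "flags".
            if line.startswith("flags"):
                flags.update(line.split(":", 1)[1].split())
    return {
        # vmx = Intel VT-x, svm = AMD-V. On a cloud VM these appear only if
        # the provider exposes nested virtualisation on that instance type.
        "cpu_virt_extensions": bool(flags & {"vmx", "svm"}),
        # /dev/kvm appears once the KVM kernel module is loaded.
        "dev_kvm_present": (base / "dev" / "kvm").exists(),
    }


if __name__ == "__main__":
    print(kvm_readiness())
```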

Kata Containers is a good fit when:

- You need microVM isolation in Kubernetes without building orchestration from scratch. Kata handles VM lifecycle, networking, and CRI integration. You bring the Kubernetes cluster.

- You are running untrusted or multi-tenant workloads. AI-generated code execution, customer-submitted scripts, and any environment where different users share infrastructure all benefit from hardware-enforced kernel boundaries.

- You want to choose your VMM. The ability to swap between Cloud Hypervisor, Firecracker, and QEMU based on workload requirements gives flexibility that raw VMM deployments do not.

- You are already running Kubernetes. The RuntimeClass integration means adding Kata isolation to an existing cluster is an incremental change, not a rearchitecture.

When Firecracker directly is the better choice: if you are building custom serverless infrastructure and have the expertise to manage VMM orchestration, image builds, networking, and lifecycle management yourself, Firecracker gives you lower-level control. See Kata Containers vs Firecracker vs gVisor for a detailed comparison.

Northflank uses Kata Containers with Cloud Hypervisor as its primary approach for microVM isolation, with Firecracker and gVisor applied depending on workload requirements.

The platform has been in production since 2021 across startups, public companies, and government deployments. Sandboxes spin up in approximately 1 to 2 seconds, with compute pricing starting at $0.01667 per vCPU per hour and $0.00833 per GB of memory per hour. See the pricing page for full details.
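As a worked example of those rates, the sketch below estimates the compute cost of a sandbox. It assumes simple linear billing on vCPU-hours and GB-hours with no other charges; the rates are the ones quoted in this article and may change, so the pricing page remains the source of truth.

```python
VCPU_HOUR_USD = 0.01667  # per vCPU per hour, as quoted in this article
GB_HOUR_USD = 0.00833    # per GB of memory per hour, as quoted in this article


def sandbox_compute_cost(vcpus: float, memory_gb: float, hours: float) -> float:
    """Estimated compute cost in USD, assuming linear per-hour billing."""
    return hours * (vcpus * VCPU_HOUR_USD + memory_gb * GB_HOUR_USD)


if __name__ == "__main__":
    # Hypothetical sandbox: 2 vCPUs and 4 GB of memory running for 1 hour.
    print(f"${sandbox_compute_cost(2, 4, 1):.5f}")
```

For 2 vCPUs and 4 GB over one hour, this works out to 2 × 0.01667 + 4 × 0.00833 = $0.06666.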

Northflank supports both ephemeral and persistent sandbox environments, either on its managed cloud or inside your own VPC. With bring your own cloud (BYOC), you can self-serve into AWS, GCP, Azure, Oracle, CoreWeave, Civo, on-premises, or bare-metal infrastructure.

Get started with Northflank sandboxes

- Sandboxes on Northflank: architecture overview and core sandbox concepts

- Deploy sandboxes on Northflank: step-by-step deployment guide

- Deploy sandboxes in your cloud: BYOC deployment guide for running sandboxes inside your own VPC

- Create a sandbox with the SDK: programmatic sandbox creation via the Northflank JS client

Get started (self-serve), or book a session with an engineer if you have specific infrastructure or compliance requirements.

Kata Containers are not the same as Docker containers. Docker containers share the host kernel and use namespaces and cgroups for isolation. Kata Containers runs each workload inside a lightweight VM with a dedicated guest kernel enforced by hardware virtualisation. From Docker or Kubernetes' perspective, the interface is the same; the isolation model underneath is fundamentally different.

Kata Containers is not a VMM. It is an orchestration framework that sits on top of one, supporting Cloud Hypervisor, Firecracker, and QEMU as backends. The VMM creates and manages the actual VM; Kata manages the integration with container tooling and handles the runtime lifecycle.

Kata Containers works with Kubernetes. It plugs into containerd or CRI-O as an alternative runtime and is selected through RuntimeClass, so you can run Kata-backed pods and standard container pods on the same cluster simultaneously.

Firecracker is a VMM that creates microVMs. Kata Containers is an orchestration framework that can use Firecracker as one of its VMM backends. You can run Kata Containers with Firecracker, Cloud Hypervisor, or QEMU. Firecracker alone does not include the orchestration layer needed to integrate with Kubernetes.

Kata Containers runs each workload in a lightweight VM with a dedicated guest kernel, providing hardware-enforced isolation via KVM. gVisor intercepts syscalls in user space through its Sentry component without booting a VM. Kata provides stronger isolation for adversarial workloads. gVisor has lower overhead and works on hosts where nested virtualisation is unavailable. See What is gVisor? for a full explanation.

Cloud Hypervisor is a strong default and the right choice for most production use cases. Use Firecracker if boot speed and density are the primary requirements. Use QEMU if you need maximum hardware compatibility. The VMM can be configured per RuntimeClass in Kubernetes, so different workloads can use different backends on the same cluster.
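The rule of thumb in this answer can be expressed as a small helper. This is a sketch, not a Kata feature: the RuntimeClass names (`kata-clh`, `kata-fc`, `kata-qemu`) are hypothetical, and the precedence, in which a hardware-compatibility requirement overrides boot speed, is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkloadNeeds:
    broad_hardware_compatibility: bool = False  # e.g. unusual or legacy devices
    boot_speed_and_density: bool = False        # e.g. many short-lived sandboxes


def choose_runtime_class(needs: WorkloadNeeds) -> str:
    """Map workload requirements to a (hypothetical) Kata RuntimeClass name."""
    # Assumed precedence: hardware compatibility first, then boot speed and density.
    if needs.broad_hardware_compatibility:
        return "kata-qemu"
    if needs.boot_speed_and_density:
        return "kata-fc"
    # Otherwise use Cloud Hypervisor, the suggested starting point.
    return "kata-clh"


if __name__ == "__main__":
    print(choose_runtime_class(WorkloadNeeds(boot_speed_and_density=True)))
```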

- What is a microVM?: how microVMs work, which technologies implement them, and where Kata Containers fits in the stack

- What is KVM?: the hardware virtualisation layer that Kata Containers builds on

- Kata Containers vs Firecracker vs gVisor: a detailed comparison of the three leading isolation technologies

- What is AWS Firecracker?: a technical breakdown of Firecracker's architecture and how Kata uses it as a backend

- What is gVisor?: how gVisor compares to Kata Containers and when to use each

- Firecracker vs Docker: how microVM isolation compares to standard container isolation

- Containers vs virtual machines: the broader comparison covering containers, VMs, and where Kata Containers fits

- How to spin up a secure code sandbox and microVM in seconds with Northflank: a step-by-step guide to deploying Kata-backed workloads on Northflank