Cloud Hypervisor vs gVisor

Cloud Hypervisor and gVisor both serve the same goal: running workloads with stronger isolation than standard containers provide. They take fundamentally different approaches to get there, and understanding the difference matters before choosing between them or deciding to use both.

This article compares Cloud Hypervisor and gVisor on architecture, isolation model, performance, infrastructure requirements, and use case fit.

What is Northflank?

Northflank is a full-stack cloud platform that uses Cloud Hypervisor as its primary VMM for microVM-backed sandboxes, with gVisor applied where syscall-interception isolation is sufficient or where nested virtualisation is unavailable. It has been in production since 2021 across startups, public companies, and government deployments. Get started (self-serve) or book a session with an engineer for specific infrastructure or compliance requirements.

| | Cloud Hypervisor | gVisor |
|---|---|---|
| Type | Virtual Machine Monitor (VMM) | Application kernel (syscall interception) |
| Isolation model | Hardware-level (KVM or MSHV) | Syscall interception (user-space kernel) |
| Kernel | Dedicated guest kernel per VM | No dedicated kernel (Sentry handles syscalls) |
| Hardware virtualisation required | Yes (KVM or MSHV) | No (Systrap) / Optional (KVM mode) |
| Boot time | Less than 100ms to userspace | Milliseconds |
| Guest OS support | 64-bit Linux, Windows 10, Windows Server 2019 | Linux only |
| Live migration | Supported | Not applicable |
| GPU passthrough | Supported via VFIO | Not supported |
| Kubernetes integration | Via Kata Containers / RuntimeClass | Via RuntimeClass (runsc) |
| Operational complexity | Higher (requires Kata for container workflows) | Lower (drop-in OCI runtime) |
| Best for | Hardware-enforced isolation, GPU workloads, Windows guests | Enhanced container security, no nested virtualisation |

Cloud Hypervisor is an open-source Virtual Machine Monitor written in Rust that runs on top of KVM or Microsoft Hypervisor (MSHV). It is governed under the Linux Foundation and based on the rust-vmm crates. The project focuses on running modern cloud workloads with minimal hardware emulation, targeting low latency, low memory overhead, and a small attack surface.

Unlike QEMU, which supports a wide range of hardware for general-purpose virtualisation, Cloud Hypervisor exclusively targets modern cloud workloads. It uses paravirtualised devices (virtio) throughout and requires no legacy device support. It supports x86-64 and AArch64 architectures and runs 64-bit Linux and Windows 10/Windows Server 2019 guests.

Key capabilities include: boot to userspace in less than 100ms, live migration, CPU and memory hotplug, GPU passthrough via VFIO, and a REST API for programmatic VM lifecycle management. See What is a microVM? and What are Kata Containers? for context on how it fits in the stack.

For a full technical breakdown of Cloud Hypervisor's architecture and capabilities, see the guide to Cloud Hypervisor.

gVisor is an open-source application kernel developed by Google that sandboxes containers by intercepting system calls in user space. Its core component, the Sentry, handles syscalls on behalf of the sandboxed workload rather than passing them straight through to the host kernel. The Sentry is written in Go, a memory-safe language.

gVisor is not a VMM and not a VM. It does not boot a dedicated guest kernel per workload. In its default Systrap mode, it requires no hardware virtualisation. In KVM mode, it uses virtualisation hardware for address space isolation, but the sandbox retains a process model rather than booting a full guest OS. It ships an OCI-compatible runtime called runsc that integrates directly with Docker, containerd, and Kubernetes. See What is gVisor? for a full technical breakdown.

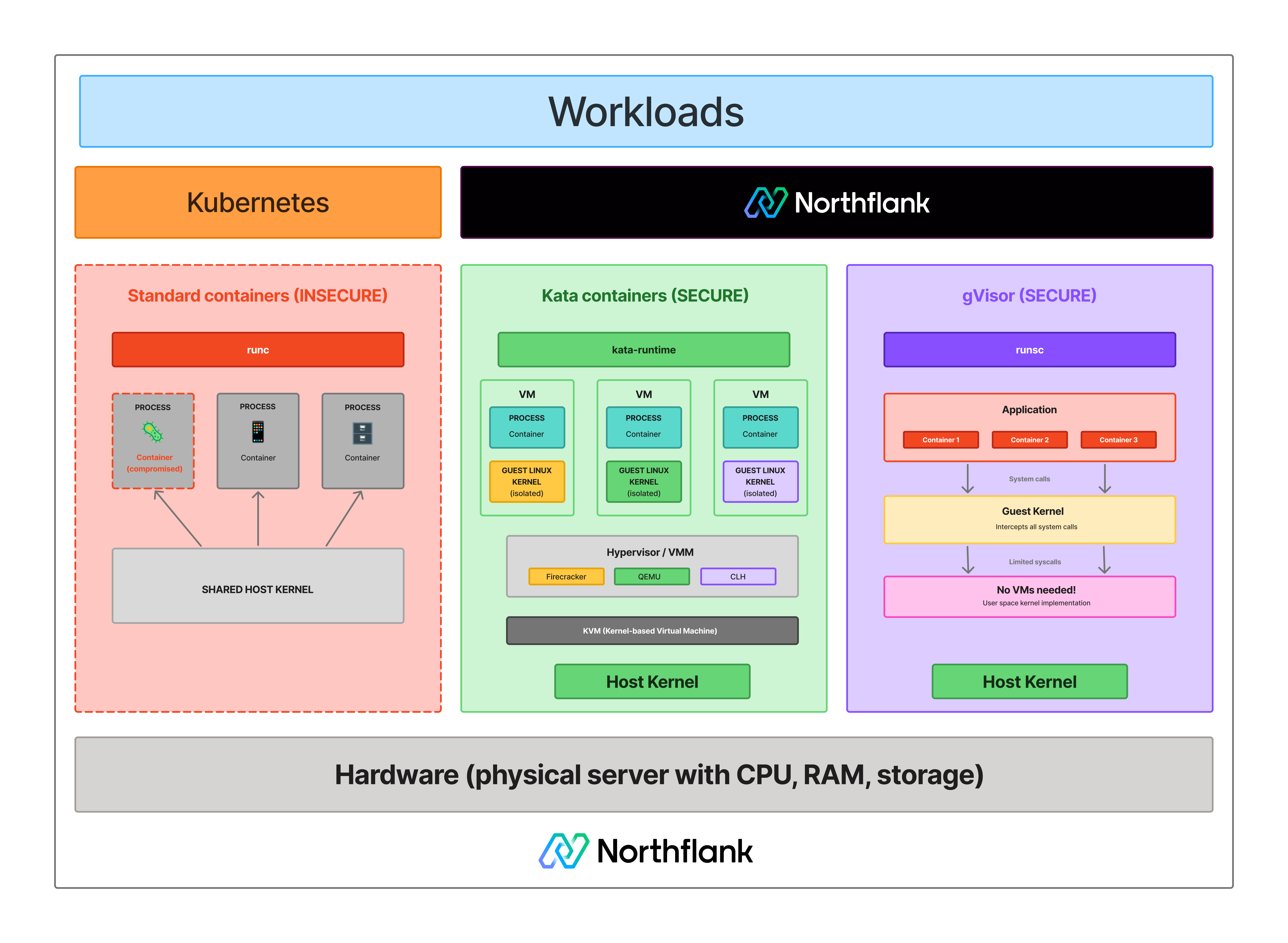

Cloud Hypervisor is a VMM. It creates and manages virtual machines. On its own, it does not integrate with container tooling and needs Kata Containers to act as the orchestration layer that bridges it to Kubernetes and Docker workflows. gVisor is an OCI-compatible container runtime that drops into existing container workflows directly.

This means the comparison is not purely symmetrical. When people ask "Cloud Hypervisor vs gVisor", they are usually asking: should I use hardware-enforced VM isolation via Cloud Hypervisor and Kata Containers, or syscall-interception isolation via gVisor, for my containerised workloads? That is the question this article answers.

Cloud Hypervisor enforces isolation at the hardware level. Each workload boots its own dedicated guest kernel inside a VM boundary that KVM or MSHV enforces using CPU virtualisation features. To escape, an attacker must first compromise the guest kernel, then break out of the hypervisor boundary enforced by CPU hardware. Those are two separate barriers, and the second is hardware-enforced.

gVisor enforces isolation at the syscall level. The Sentry intercepts syscalls and handles them in user space, so the workload never reaches the host kernel directly. In Systrap mode, there is no hardware-enforced boundary. In KVM mode, virtualisation hardware is used for address space isolation, but the sandbox does not provide a dedicated guest kernel per workload.

For actively adversarial workloads, Cloud Hypervisor via Kata Containers provides the stronger isolation guarantee. For workloads that need meaningfully stronger isolation than standard containers without the overhead or KVM requirements of microVMs, gVisor is a practical middle ground.

The two isolation models lead to different performance and capability trade-offs:

- Boot time: Cloud Hypervisor boots to userspace in less than 100ms. Kata Containers adds orchestration overhead on top, putting end-to-end sandbox startup in the 150 to 300ms range, depending on configuration. gVisor starts in milliseconds with no kernel boot. For high-frequency, short-lived workloads, gVisor's startup advantage is real.

- I/O overhead: gVisor's syscall interception adds latency on I/O-heavy workloads. Benchmarks suggest 10 to 30% slower than native containers depending on workload type. Cloud Hypervisor workloads run near-native I/O because the guest kernel handles syscalls directly without an interception layer. For databases, high-throughput file processing, or network-intensive workloads, Cloud Hypervisor has a performance advantage.

- CPU-bound workloads: For CPU-bound workloads with low syscall frequency, gVisor's overhead is minimal. The syscall tax primarily affects high-frequency syscall workloads.

- GPU passthrough: Cloud Hypervisor supports GPU passthrough via VFIO, making it suitable for GPU workloads that need hardware-level isolation. gVisor does not support GPU passthrough.

- Windows guests: Cloud Hypervisor supports Windows 10 and Windows Server 2019 as guest operating systems. gVisor runs Linux workloads only.
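The "syscall tax" described in the list above can be reasoned about with simple arithmetic: the added time is roughly the number of syscalls multiplied by the extra cost of intercepting each one. The sketch below is a back-of-envelope model only. The per-syscall cost and the workload figures are hypothetical inputs chosen for illustration, not measurements of gVisor.

```python
def interception_overhead(native_seconds: float, syscalls: int, extra_us_per_syscall: float) -> float:
    """Fractional slowdown from a fixed extra cost per intercepted syscall."""
    added_seconds = syscalls * extra_us_per_syscall / 1_000_000
    return added_seconds / native_seconds


# Two hypothetical 10-second workloads with the same assumed 2 µs extra cost per syscall.
cpu_bound = interception_overhead(10.0, 50_000, 2.0)     # few syscalls
io_heavy = interception_overhead(10.0, 1_000_000, 2.0)   # many syscalls

print(f"CPU-bound: {cpu_bound:.1%} slower")
print(f"I/O-heavy: {io_heavy:.1%} slower")
```

With these made-up inputs the CPU-bound job slows by about 1% and the I/O-heavy job by about 20%, which is the shape of the difference the comparison describes. Real figures depend on the syscall mix and platform mode, so measure your own workload.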

Cloud Hypervisor requires KVM or MSHV. On x86-64, the host must support Intel VT-x or AMD-V with KVM available, or run on a host with Microsoft Hypervisor support. On virtualised cloud instances, the provider must support nested virtualisation for that instance type. Running it in Kubernetes also requires Kata Containers for orchestration. See What is KVM? for the hardware layer details.

gVisor's Systrap mode requires no hardware virtualisation. It runs on any Linux host. This makes it the practical choice when nested virtualisation is unavailable. Its KVM mode optionally uses virtualisation hardware but does not require it.
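A quick way to see which side of this requirement a Linux host falls on is to check for the `/dev/kvm` device and for the `vmx` (Intel VT-x) or `svm` (AMD-V) CPU flags. The sketch below does that for x86-64 only; AArch64 hosts report CPU features differently and are not covered. The return labels are hypothetical names used for illustration.

```python
import os


def cpu_has_virt_extensions(cpuinfo_text: str) -> bool:
    """True if any 'flags' line in /proc/cpuinfo lists vmx or svm (x86-64 only)."""
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            _, _, rest = line.partition(":")
            flags = rest.split()
            if "vmx" in flags or "svm" in flags:
                return True
    return False


def classify_host(cpuinfo_text: str, kvm_device_present: bool) -> str:
    """Return 'kvm-available' when microVMs are an option, else 'systrap-only'."""
    if kvm_device_present and cpu_has_virt_extensions(cpuinfo_text):
        return "kvm-available"
    return "systrap-only"


def classify_this_host() -> str:
    """Inspect the current Linux host. Not called here, so the block stays portable."""
    with open("/proc/cpuinfo", encoding="utf-8") as f:
        return classify_host(f.read(), os.path.exists("/dev/kvm"))
```

A result of `systrap-only` on a cloud VM usually means nested virtualisation is not exposed to that instance, which is the case where gVisor's Systrap mode remains usable.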

For Kubernetes integration, both use RuntimeClass. Kata Containers provides the runtime handler for Cloud Hypervisor-backed workloads. gVisor provides runsc as its handler. Both can run alongside standard container pods on the same cluster without control plane changes.

Cloud Hypervisor runs a full Linux guest kernel per workload. Syscall compatibility is not a concern; any Linux workload runs inside a Cloud Hypervisor VM without modification. Windows guest support adds further compatibility for teams running mixed workloads.

gVisor's Sentry re-implements Linux system interfaces but does not cover every syscall. Applications that depend on less common or recently added syscalls may not behave correctly under gVisor. Testing your specific workload before deploying to production matters. For workloads with unusual syscall requirements, Cloud Hypervisor is the safer choice.
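One lightweight way to run that kind of test is to execute the same container command under the default runtime and under `runsc`, then compare exit codes. The sketch below assumes Docker is installed and that `runsc` has already been registered as a Docker runtime; the image name and command in the usage note are placeholders for your own workload's test suite.

```python
import subprocess


def docker_cmd(runtime: str, image: str, args: list[str]) -> list[str]:
    """Build a 'docker run' command line that selects a specific runtime."""
    return ["docker", "run", "--rm", f"--runtime={runtime}", image, *args]


def compare_runtimes(image: str, args: list[str], timeout: int = 120) -> dict[str, int]:
    """Run the same command under runc and runsc and return each exit code."""
    results: dict[str, int] = {}
    for runtime in ("runc", "runsc"):
        proc = subprocess.run(
            docker_cmd(runtime, image, args),
            capture_output=True,
            timeout=timeout,
        )
        results[runtime] = proc.returncode
    return results
```

For example, `compare_runtimes("my-app:test", ["pytest", "-q"])` would return a non-zero code under `runsc` only if the test suite hits behaviour the Sentry handles differently. A matching exit code is a useful first signal, not proof of full compatibility.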

Use Cloud Hypervisor (via Kata Containers) when:

- Your threat model involves actively adversarial workloads requiring hardware-enforced isolation

- You are running untrusted code at scale (AI-generated outputs, customer-submitted scripts, multi-tenant platforms)

- Your workloads are I/O-heavy and near-native performance is a requirement

- You need GPU passthrough for isolated GPU workloads

- You need to run Windows guests

- Your workloads have syscall requirements gVisor does not cover

- KVM or MSHV is available on your host

Use gVisor when:

- Nested virtualisation is unavailable on your host

- You want stronger isolation than standard containers without microVM overhead or operational complexity

- Your workloads start and stop frequently and millisecond startup matters

- Your workloads are CPU-bound with low syscall frequency

- You want a simpler integration path with existing Docker and Kubernetes workflows

- Defence-in-depth is the goal rather than maximum isolation strength
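The two checklists above can be condensed into a small decision helper. This is a deliberately simplified sketch: the field names and return labels are hypothetical, and a real decision would also weigh startup frequency, operational complexity, and cost.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    kvm_available: bool             # KVM or MSHV usable on the host
    adversarial: bool = False       # actively hostile or untrusted code
    needs_gpu: bool = False         # GPU passthrough required
    needs_windows: bool = False     # Windows guest required
    io_heavy: bool = False          # near-native I/O required
    unusual_syscalls: bool = False  # syscalls gVisor may not cover


def recommend(w: Workload) -> str:
    """Return 'cloud-hypervisor', 'gvisor', or 'unsupported'."""
    # GPU passthrough and Windows guests are hard requirements gVisor cannot meet.
    if w.needs_gpu or w.needs_windows:
        return "cloud-hypervisor" if w.kvm_available else "unsupported"
    prefers_vm = w.adversarial or w.io_heavy or w.unusual_syscalls
    if prefers_vm and w.kvm_available:
        return "cloud-hypervisor"
    # Without hardware virtualisation, or when no VM-only need exists, fall back to gVisor.
    return "gvisor"


print(recommend(Workload(kvm_available=True, adversarial=True)))
print(recommend(Workload(kvm_available=False, io_heavy=True)))
```

Here "cloud-hypervisor" stands for Cloud Hypervisor via Kata Containers, and the gVisor fallback for a VM-preferring workload without KVM reflects the middle-ground role described earlier rather than an equivalent guarantee.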

Cloud Hypervisor and gVisor can be used together: they complement rather than compete. Northflank uses Cloud Hypervisor as the primary VMM for microVM-backed workloads via Kata Containers, with gVisor applied where syscall-interception isolation is sufficient or where nested virtualisation is unavailable on the host. The isolation technology is chosen per workload rather than applying a single approach to everything.

Northflank's sandbox infrastructure uses Kata Containers with Cloud Hypervisor as its primary VMM, with gVisor applied for workloads where syscall-interception isolation is sufficient or where nested virtualisation is unavailable. Firecracker is also applied for workloads that benefit from its minimal device model. The platform has been in production since 2021 across startups, public companies, and government deployments.

Sandboxes spin up in approximately 1 to 2 seconds, with compute pricing starting at $0.01667 per vCPU per hour and $0.00833 per GB of memory per hour. See the pricing page for full details.

Northflank supports both ephemeral and persistent sandbox environments, either on its managed cloud or inside your own VPC. Bring your own cloud is self-serve and covers AWS, GCP, Azure, Oracle, CoreWeave, Civo, on-premises, and bare-metal deployments.

Get started with Northflank sandboxes

- Sandboxes on Northflank: architecture overview and core sandbox concepts

- Deploy sandboxes on Northflank: step-by-step deployment guide

- Deploy sandboxes in your cloud: BYOC guide to running sandboxes inside your own VPC

- Create a sandbox with the SDK: programmatic sandbox creation via the Northflank JS client

Get started (self-serve), or book a session with an engineer if you have specific infrastructure or compliance requirements.

Cloud Hypervisor is not itself a microVM. It is a VMM (the software that creates and manages virtual machines), and a microVM is the lightweight virtual machine it creates. Cloud Hypervisor is to a microVM what QEMU is to a traditional VM. See What is a microVM? for the distinction.

Cloud Hypervisor runs on KVM or Microsoft Hypervisor (MSHV). It requires one of these hypervisor backends. It does not run on hosts without hardware virtualisation support the way gVisor's Systrap mode does.

Whether gVisor can stand in for Cloud Hypervisor depends on your threat model and infrastructure. For workloads where syscall-interception isolation is sufficient and hardware virtualisation is unavailable, gVisor is a practical alternative. For actively adversarial workloads where hardware-enforced boundaries are required, or for GPU and Windows workloads, Cloud Hypervisor via Kata Containers provides capabilities gVisor does not.

Cloud Hypervisor does not integrate with Kubernetes on its own. It is a VMM and requires an orchestration layer, which Kata Containers provides via the Container Runtime Interface. See What are Kata Containers? for how that integration works.

Cloud Hypervisor and Firecracker are both Rust-based VMMs that use KVM for hardware isolation. Cloud Hypervisor targets a broader range of cloud workloads with features including live migration, GPU passthrough, Windows guest support, CPU and memory hotplug, and a REST API. Firecracker prioritises a minimal device model for maximum simplicity and density. Both are supported as VMM backends in Kata Containers. See What is AWS Firecracker? for Firecracker's architecture.

Cloud Hypervisor and QEMU are both VMMs that use KVM. QEMU supports a wide range of hardware architectures and legacy devices for general-purpose virtualisation. Cloud Hypervisor exclusively targets modern cloud workloads, using paravirtualised virtio devices, no legacy hardware, and 64-bit guests only. That narrower scope results in a smaller codebase and attack surface.

- Guide to Cloud Hypervisor: a full technical guide to Cloud Hypervisor's architecture, capabilities, and how to run it in production

- What are Kata Containers?: how Kata Containers orchestrates Cloud Hypervisor for Kubernetes workloads

- What is gVisor?: how gVisor works, its components, execution platforms, and limitations

- What is a microVM?: how microVMs work and how Cloud Hypervisor implements them

- What is KVM?: the hardware virtualisation layer Cloud Hypervisor builds on

- Kata Containers vs gVisor: how Kata Containers and gVisor compare for Kubernetes workload isolation

- MicroVM vs gVisor: the broader comparison of hardware-enforced and syscall-interception isolation

- Kata Containers vs Firecracker vs gVisor: a three-way comparison of the leading isolation technologies

- What is AWS Firecracker?: how Firecracker compares to Cloud Hypervisor as a VMM backend

- Firecracker vs gVisor: a focused comparison of Firecracker against gVisor