![Header image for blog post: Ephemeral sandbox environments [2026 guide]](https://assets.northflank.com/ephemeral_sandbox_environments_29925c1594.png?auto=avif&quality=100&width=937)

Ephemeral sandbox environments [2026 guide]

Ephemeral sandbox environments have become a core part of how you ship software and run AI agent workloads safely.

This article covers how they work, the main isolation models, key considerations for production use, and how platforms like Northflank can simplify running them at scale.

Ephemeral sandbox environments are short-lived, isolated execution contexts that spin up on demand and are destroyed once their purpose is served. They replace long-lived shared test environments with per-task, per-PR, or per-request environments that start clean every time and leave no lingering state.

Three variables determine if your ephemeral sandbox strategy works in production:

- How deep your isolation needs to be versus how fast environments need to start up.

- How closely environments need to match production versus what that costs at scale.

- How much of the lifecycle you can automate versus the operational overhead that introduces.

The right isolation model depends on your threat model, not on latency alone.

Platforms like Northflank provide both ephemeral and persistent sandbox infrastructure with microVM-based isolation (Firecracker, gVisor, Kata), bring-your-own-cloud support, and environment creation in roughly 1-2 seconds. You can run sandboxed workloads inside your own VPC rather than a third-party managed cloud. Northflank is used across a range of organisations, from early-stage startups to public companies and government deployments.

An ephemeral sandbox environment is an isolated runtime that you create, use, and destroy within a defined lifecycle, typically triggered by a specific event like a pull request, a function call, or an agent task. Unlike persistent environments, ephemeral sandboxes carry no long-term state and impose no cleanup burden on your team.

The "sandbox" refers to the isolation model: code running inside the environment has no access to external systems, other tenants' data, or your production infrastructure unless you explicitly allow it. The "ephemeral" part means the environment exists only as long as it needs to.
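The "unless you explicitly allow it" rule is a default-deny policy. Here is a minimal sketch of that idea for outbound requests, assuming a hypothetical `egress_allowed` helper and an allowlist of hostnames. In a real sandbox the network layer would enforce this, not application code.

```python
from urllib.parse import urlsplit


def egress_allowed(url, allowed_hosts):
    """Default-deny: a URL passes only if its host is explicitly allowlisted."""
    host = urlsplit(url).hostname  # already lowercased by urlsplit
    return host is not None and host in allowed_hosts


# Hypothetical allowlist for a sandbox that may only reach a package index.
ALLOWED = {"pypi.org"}
```

An empty allowlist denies everything, which matches the starting state described above.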

In practice, you'll encounter ephemeral sandbox environments in two contexts:

- Development and testing: Preview environments, per-PR deployments, integration test runners, and short-lived staging replicas.

- Code execution for AI: Running LLM-generated or agent-authored code in isolated runtimes where the code cannot be trusted by default.

Both use cases share the same infrastructure requirements: fast creation, deep isolation, predictable resource usage, and reliable teardown. Where they diverge is in isolation depth and latency requirements.

For a deeper look at how preview environments work in practice, see the what and why of ephemeral preview environments on Kubernetes.

In DevOps, "ephemeral" refers to infrastructure with a lifecycle tied to a specific task rather than a calendar. You create an environment when you need it, it runs for a defined duration, and it's destroyed automatically when the task is complete.

This contrasts with the traditional model of maintaining a small number of long-lived shared environments (dev, QA, staging, production). Those environments accumulate stale state, drift away from production configuration, and create bottlenecks when multiple developers need to test simultaneously.

Ephemeral environments solve the bottleneck problem by making environment creation cheap enough to do per-PR or per-request. The trade-off is that creation time and infrastructure overhead now become variables you need to optimize actively.
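The create, use, destroy lifecycle can be sketched with a context manager. This example only illustrates the lifecycle guarantee (teardown always runs) using a scratch directory. It provides no isolation, and `ephemeral_workspace` and `run_task` are hypothetical names.

```python
import shutil
import tempfile
from contextlib import contextmanager
from pathlib import Path


@contextmanager
def ephemeral_workspace(prefix="sandbox-"):
    """Create a scratch directory, hand it to the task, and always remove it."""
    path = Path(tempfile.mkdtemp(prefix=prefix))
    try:
        yield path
    finally:
        # Teardown runs even if the task raises, so no state lingers.
        shutil.rmtree(path, ignore_errors=True)


def run_task():
    """Use the workspace for one task and report whether it existed meanwhile."""
    with ephemeral_workspace() as workspace:
        (workspace / "result.txt").write_text("ok")
        existed_during = workspace.exists()
    return workspace, existed_during
```

Tying teardown to the end of the task, rather than to a scheduled cleanup job, is what keeps each environment starting clean.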

There is no single implementation model for ephemeral sandboxes. The right approach depends on your workload type, your isolation requirements, and your existing infrastructure. Here are the four primary models in use in 2026.

Containers using Linux namespaces and cgroups are the default starting point for most teams: fast to create, cheap to run, and compatible with existing Kubernetes clusters. The limitation is kernel sharing. All containers on a host share the same OS kernel, so a kernel vulnerability can break isolation entirely. Use this model only for internal workflows where the code running inside is trusted.
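As a rough illustration of the container model, the sketch below builds a `docker run` command line that caps resources and disables networking. The flags are standard Docker CLI options. The function name and default limits are assumptions. These flags harden a container but do not change the fact that it shares the host kernel.

```python
def container_sandbox_argv(image, command, memory="256m", cpus="0.5", pids=64):
    """Build a `docker run` argv with resource caps and no network access."""
    return [
        "docker", "run",
        "--rm",                                 # delete the container on exit
        "--network", "none",                    # no network interfaces except loopback
        "--memory", memory,                     # cgroup memory limit
        "--cpus", cpus,                         # cgroup CPU limit
        "--pids-limit", str(pids),              # cap the number of processes
        "--read-only",                          # read-only root filesystem
        "--cap-drop", "ALL",                    # drop all Linux capabilities
        "--security-opt", "no-new-privileges",  # block privilege escalation
        image,
        *command,
    ]
```

You could pass the result to `subprocess.run(argv, check=True)` on a host with Docker installed.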

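MicroVMs sit between containers and full VMs, giving each sandbox its own kernel boundary without the startup overhead of a full VM. The three runtimes you'll encounter most are listed below; gVisor is strictly a userspace kernel rather than a microVM, but it is commonly grouped with them: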

- Firecracker: lightweight VM via KVM, designed for serverless and multi-tenant workloads.

- gVisor: runs a userspace kernel that handles syscalls from guest applications, reducing the attack surface on the host kernel.

- Kata Containers: OCI containers inside lightweight VMs, compatible with existing container tooling.

MicroVM-class isolation is the current standard for running untrusted or AI-generated code at scale.

Full VMs give each sandbox a separate guest OS and kernel. Reserve this for malware analysis or compliance workloads requiring complete kernel-level separation. Startup times and memory overhead make it impractical at scale.

In the full-stack preview environment model, each pull request or feature branch gets a complete, production-like deployment: services, databases, networking, and configuration, spun up automatically and torn down on merge or close. Teams running microservices architectures often need 10-30 services per environment, which is where lifecycle management quickly becomes non-trivial.
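The per-PR lifecycle can be sketched as a mapping from pull request events to environment actions. The action names below (`opened`, `reopened`, `synchronize`, `closed`) are GitHub `pull_request` webhook actions. The naming scheme and the `plan` helper are assumptions for illustration, not any platform's API.

```python
import re

# GitHub sends "closed" for both merged and unmerged pull requests.
ACTIONS = {
    "opened": "create",
    "reopened": "create",
    "synchronize": "update",  # new commits pushed to the PR branch
    "closed": "destroy",
}


def environment_name(repo, pr_number):
    """Derive a DNS-friendly, per-PR environment name (hypothetical scheme)."""
    slug = re.sub(r"[^a-z0-9]+", "-", repo.lower()).strip("-")
    return f"{slug}-pr-{pr_number}"


def plan(action, repo, pr_number):
    """Return (step, environment name), or None for events that need no work."""
    step = ACTIONS.get(action)
    if step is None:
        return None
    return step, environment_name(repo, pr_number)
```

A deterministic name means the `destroy` step can find the environment without storing extra state.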

Run ephemeral sandbox environments on Northflank

Northflank supports all four models above, from container-based preview environments to microVM-isolated code execution, with both ephemeral and persistent modes, BYOC support, and environment creation in roughly 1-2 seconds.

Get started with Northflank or schedule a demo.

The right model depends on what you're protecting against and what latency you can tolerate. Here's how they compare at a glance:

| Model | Isolation boundary | Best for | Key limitation |
|---|---|---|---|
| Containers | Shared kernel (namespaces + cgroups) | Trusted internal code, dev workflows | Kernel vulnerability breaks isolation |
| gVisor | Userspace kernel (syscall interception) | Untrusted code, multi-tenant workloads | Incomplete syscall compatibility with some applications |
| Firecracker microVM | Separate kernel via KVM | AI agent execution, serverless, multi-tenancy | Requires KVM support on host |
| Kata Containers | Separate kernel via lightweight VM | Regulated workloads, OCI-compatible pipelines | Higher per-sandbox overhead than Firecracker |
| Full VM | Separate kernel via hypervisor | Malware analysis, hardware-level compliance | Cost and startup latency make it impractical at scale |
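One way to read the comparison is as a lookup from trust level to candidate models. The sketch below is a simplification under stated assumptions: the `Trust` categories and `candidate_models` function are hypothetical, and a real decision would also weigh latency, cost, and compliance.

```python
from enum import Enum


class Trust(Enum):
    TRUSTED_INTERNAL = "trusted internal code"
    UNTRUSTED = "AI-generated, third-party, or user code"
    MALWARE_ANALYSIS = "known-hostile samples"


def candidate_models(trust, host_has_kvm):
    """Return the isolation models from the comparison that fit a trust level."""
    if trust is Trust.TRUSTED_INTERNAL:
        return ["Containers"]
    if trust is Trust.MALWARE_ANALYSIS:
        return ["Full VM"]
    # Untrusted code: gVisor intercepts syscalls in userspace and can run without KVM.
    models = ["gVisor"]
    if host_has_kvm:
        # Firecracker requires KVM. Treating Kata Containers the same way
        # is an assumption of this sketch.
        models += ["Firecracker microVM", "Kata Containers"]
    return models
```

For example, `candidate_models(Trust.UNTRUSTED, host_has_kvm=False)` narrows the choice to gVisor, which is the situation on hosts without nested virtualization.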

Before you commit to an implementation model, these are the variables that will determine whether your strategy holds up in production.

- Isolation depth: Containers are sufficient for trusted internal code. For AI-generated, third-party, or external user code, you need at minimum gVisor or Firecracker-level isolation.

- Creation latency: Vendors often quote VM boot time, not full end-to-end time, which also includes image pulls, network setup, and service initialization. Know which metric applies to your use case before benchmarking.

- Environment accuracy: Run the same container images, database versions, and configuration as production. A preview environment that omits your background job workers will not catch integration bugs involving those workers.

- Cost controls: Ephemeral environments accrue cost for as long as they exist, even when idle. Scale-to-zero policies, auto-shutdown timers, and per-environment resource limits are essential.

- Lifecycle and secrets management: Sandboxes not torn down correctly leave dangling resources and can hold onto sensitive data longer than intended. Each environment also needs the correct secrets for its context. Reusing production secrets in ephemeral environments is a common misconfiguration with real security consequences.
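The auto-shutdown timers and teardown guarantees above can be approximated with a periodic reaper. This is a minimal sketch: the `Environment` record, the four-hour maximum age, and the 30-minute idle limit are all assumptions, and a real reaper would go on to call your platform's delete operation for each name returned.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Environment:
    name: str
    created_at: float      # Unix timestamp, seconds
    last_active_at: float  # Unix timestamp, seconds


def expired(envs, now, max_age_s=4 * 3600, max_idle_s=30 * 60):
    """Return names of environments past their maximum age or idle limit."""
    return [
        env.name
        for env in envs
        if now - env.created_at > max_age_s
        or now - env.last_active_at > max_idle_s
    ]
```

Checking both age and idle time catches two failure modes: environments that are busy but forgotten, and environments that were abandoned shortly after creation.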

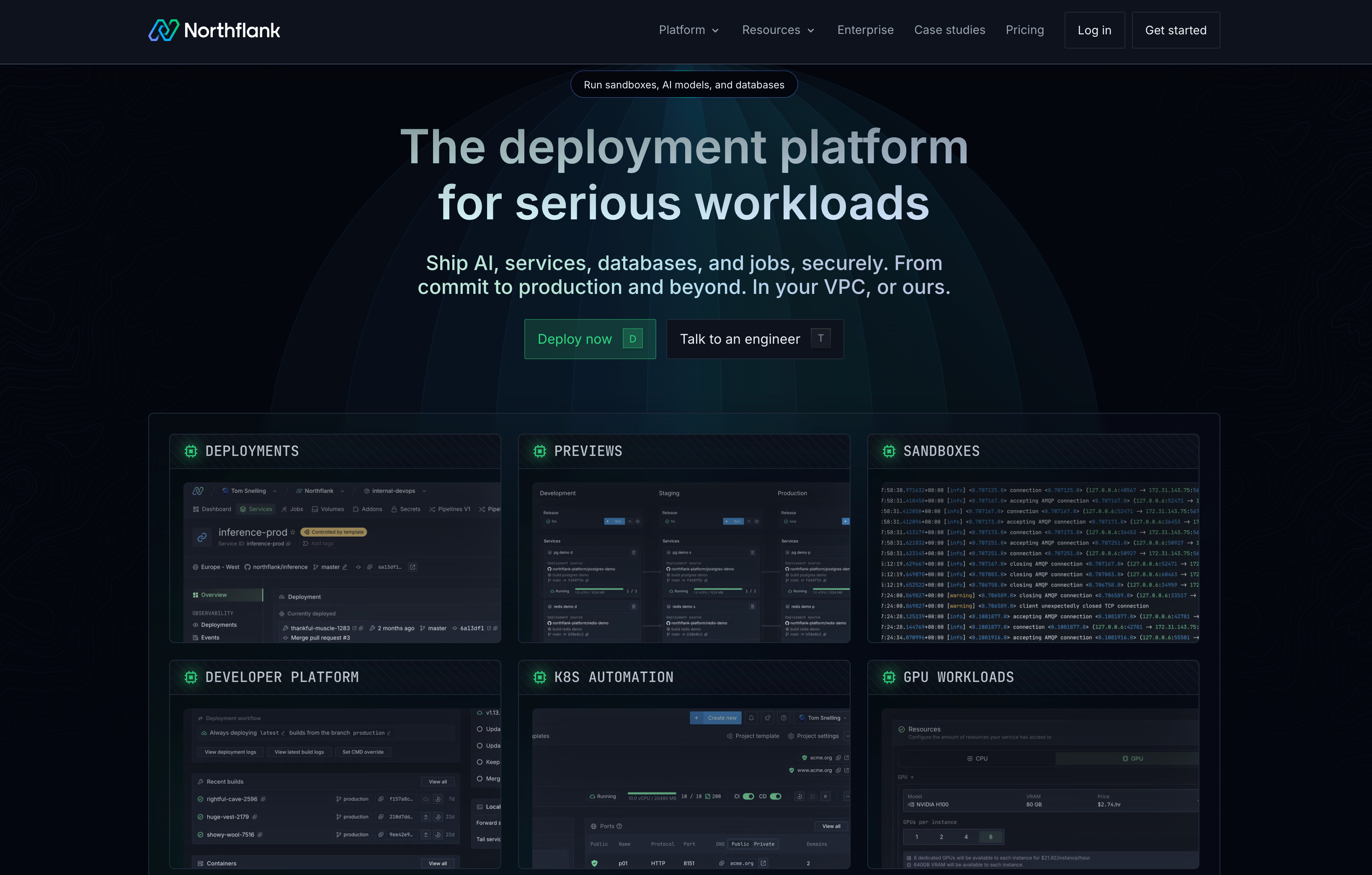

Northflank is a developer platform for running full workload environments at scale, covering services, databases, background jobs, and agents.

Its features include Sandboxes, which run isolated, microVM-backed execution environments, and Preview Environments, which spin up full-stack, PR-based deployments automatically.

If you need to run ephemeral sandboxes in production, here is what it provides across the full stack:

You can create environments in roughly 1-2 seconds end-to-end, covering the full creation lifecycle including networking and service initialization. You can trigger environments via the API, CLI, or Git integration for PR-based preview environments, and configure automatic teardown based on lifecycle rules you define.

Both ephemeral and persistent modes are supported. Short-lived execution pools handle per-request workloads. Long-running stateful services handle workloads that need to maintain state across sessions.

For workloads requiring deeper isolation, Northflank supports microVM-based runtimes: Firecracker, gVisor, and Kata Containers, selected based on your workload requirements. This makes it practical to run untrusted or AI-generated code safely in production.

For a detailed breakdown of how to configure each runtime, see how to spin up a secure code sandbox and microVM in seconds with Northflank.

Most sandbox platforms host your workloads on their own managed cloud. With Northflank, you can deploy sandbox infrastructure inside your own VPC on AWS, GCP, Azure, or on-premises infrastructure.

This matters if you're in a regulated industry where workloads cannot leave a controlled network boundary, or if you simply prefer to keep compute inside your own infrastructure. BYOC (Bring Your Own Cloud) on Northflank is self-serve.

Your environment is not limited to single containers or functions. You can run agents, workers, APIs, databases, and background jobs together in a single environment, with both CPU and GPU support.

On-demand GPUs are available without quota requests or manual provisioning, which is relevant for AI agent pipelines that require GPU-accelerated inference alongside code execution.

For more on sandboxing AI agent workloads specifically, see code execution environments for autonomous agents and best sandboxes for coding agents.

Usage is billed at $0.01667 per vCPU per hour and $0.00833 per GB of memory per hour, with GPU pricing on the Northflank pricing page.
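To see what those rates mean for a short-lived sandbox, here is a back-of-the-envelope calculation. It assumes usage is prorated linearly by the second, which is not confirmed here, and it excludes GPU, storage, and network charges. `sandbox_cost` is a hypothetical helper.

```python
VCPU_PER_HOUR = 0.01667  # USD per vCPU per hour, from the rates above
GB_PER_HOUR = 0.00833    # USD per GB of memory per hour, from the rates above


def sandbox_cost(vcpus, memory_gb, seconds):
    """Estimate compute cost in USD, assuming linear per-second proration."""
    hourly = vcpus * VCPU_PER_HOUR + memory_gb * GB_PER_HOUR
    return hourly * seconds / 3600


# A 2 vCPU, 4 GB sandbox that lives for 15 minutes.
estimate = sandbox_cost(vcpus=2, memory_gb=4, seconds=15 * 60)
```

Under these assumptions the 15-minute sandbox costs roughly $0.0167, so a thousand such runs would come to about $16.67 in compute.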

Get started with ephemeral sandbox environments

Northflank provides sandbox infrastructure with microVM isolation, BYOC (Bring your own cloud) support, and both ephemeral and persistent execution modes.

Get started with Northflank or schedule a demo.

Ephemeral sandbox environments are temporary, isolated infrastructure instances created on demand for a specific task and destroyed automatically when that task is complete. You'll encounter them in developer workflows (preview environments, integration testing, CI/CD pipelines) and AI agent systems (isolated code execution).

A sandbox environment is any isolated execution context. A preview environment is a specific type of sandbox used in developer workflows: a full-stack deployment created per pull request or branch for testing and stakeholder review. All preview environments are sandboxes, but not all sandboxes are preview environments.

Ephemeral sandboxes are destroyed after use and carry no persistent state. Persistent sandboxes maintain state across sessions, retaining filesystem contents, network identity, and configuration. The right choice depends on whether your workload needs state continuity across multiple interactions.

Ephemeral sandboxes can run AI agent workloads, but container-level isolation is insufficient for running untrusted AI-generated code. AI agent execution pipelines require microVM-based isolation (Firecracker, gVisor, or Kata Containers) to enforce a meaningful security boundary between generated code and the host system. For more detail, see what is an AI sandbox and best code execution sandboxes for AI agents.

If you want to go deeper on any of the topics covered in this article, these resources are a good next step.

- The what and why of ephemeral preview environments on Kubernetes: how preview environments work in Kubernetes-based stacks and what makes them challenging for full-stack apps.

- What is a sandbox environment?: sandbox isolation models, use cases, and how to choose between them in 2026.

- What is a staging environment and how to set one up: how staging environments differ from ephemeral sandboxes and when you need both.

- Preview environment platforms: a comparison of platforms for running PR-based preview environments at scale.

- Remote code execution sandbox: infrastructure requirements for running code execution sandboxes securely.

- What is an AI sandbox?: what AI sandboxes are and how isolation requirements differ from standard dev environments.

- Best cloud sandboxes: cloud sandbox options for different workload types and use cases.